As the CEO, I often face complex and challenging judgement calls. Even though we are a small business, Frappe is a complex beast. We work across 20+ disciplines, and each one is knowledge-intensive, requiring us to handle complex situations and make decisions. These vary from engineering and design to sales and support. There are clearly limits to how much one person can understand. One recent challenge I faced was setting benchmarks for excellence for each team and the organization.

Earlier in the month, I had completed quarterly reviews with most of our team members. I had formed my own judgement of how well each team was doing and shared a grade for each team based on my perception. While I tried to do my best with my ratings, I was sure that my own biases were creeping in. If you have been following us, you know that we at Frappe are strong believers in democracy and are constantly experimenting with ways of using it in day-to-day decision making. So at our weekly all-hands (Friday Forums), we decided to open the question to everyone and let every member rate each team out of 5 using anonymous polls.

Wisdom of Crowds

There is plenty of precedent for such methods. James Surowiecki has collected several studies and anecdotes in the book The Wisdom of Crowds showing that the predictions or judgements made by a group of people are often better than those of individuals or experts. This is also the basis of a democratic society - that the collective wisdom of the many is better than that of a few. It also stems from the idea that each individual must have equal rights. Here is the classic example:

At a 1906 country fair in Plymouth, 800 people participated in a contest to estimate the weight of a slaughtered and dressed ox. Statistician Francis Galton observed that the median guess, 1207 pounds, was accurate within 1% of the true weight of 1198 pounds. This has contributed to the insight in cognitive science that a crowd's individual judgments can be modeled as a probability distribution of responses with the median centered near the true value of the quantity to be estimated.
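To make the idea of "a distribution of guesses centered near the true value" concrete, here is a minimal simulation sketch. It is not Galton's data: the noise model (independent, unbiased, normally distributed guesses) and the spread `NOISE_SD` are assumptions made purely for illustration. Only the crowd size and the true weight come from the account above.

```python
import random
import statistics

TRUE_WEIGHT = 1198  # pounds, the true weight quoted above
N_GUESSERS = 800    # crowd size quoted above
NOISE_SD = 75       # ASSUMPTION: spread of individual guesses, in pounds


def simulate_guesses(seed=0):
    """Draw independent, unbiased guesses (an idealised, hypothetical crowd)."""
    rng = random.Random(seed)
    return [rng.gauss(TRUE_WEIGHT, NOISE_SD) for _ in range(N_GUESSERS)]


def crowd_vs_individual(guesses):
    """Compare the error of the median guess with the typical individual error."""
    crowd_estimate = statistics.median(guesses)
    crowd_error = abs(crowd_estimate - TRUE_WEIGHT)
    individual_errors = [abs(g - TRUE_WEIGHT) for g in guesses]
    mean_individual_error = statistics.fmean(individual_errors)
    return crowd_estimate, crowd_error, mean_individual_error


guesses = simulate_guesses()
crowd_estimate, crowd_error, mean_individual_error = crowd_vs_individual(guesses)
print(f"median guess: {crowd_estimate:.1f} lb")
print(f"error of the median: {crowd_error:.1f} lb")
print(f"average individual error: {mean_individual_error:.1f} lb")
```

Under these assumptions the median lands far closer to the true weight than a typical individual guess does. The effect depends on the guesses being independent and not sharing a common bias, which is why the conditions discussed next matter.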

Of late, because of the ease of collecting group opinions via the internet, the use of group intelligence has become more and more prevalent. The Economist ran an article on how governments are using crowd intelligence to predict important political events, and these methods are often shown to be better than relying on experts.

Conditions for Group Wisdom

Surowiecki lays down 5 conditions for crowds to come to a high quality decision: diversity, independence, decentralization, aggregation and trust. In our experiment most of these hold:

- Diversity: Frappe has incredible diversity in disciplines, education and personal backgrounds. On the negative side, most people in Frappe are young, so there could be a small bias on that front.

- Independence: The polls were anonymous and were done remotely. Anonymity frees up people from being judged about their choices and being remote means that people cannot be easily influenced by their peers. Teams in Frappe are also pretty small (mostly under 10) so we can assume reasonable independence in the voting.

- Decentralization: Due to the diversity and remote work, most of the teams are decentralized in Frappe and have their own local sources of knowledge that are different from other teams.

- Aggregation: Live Telegram polls create a fantastic mechanism for aggregation.

- Trust: Frappe has always been a trust-first organization (except when it comes to information security). We have extraordinary levels of transparency in decision making, and the financials, pay and other details of the company are openly shared and available.

The Results

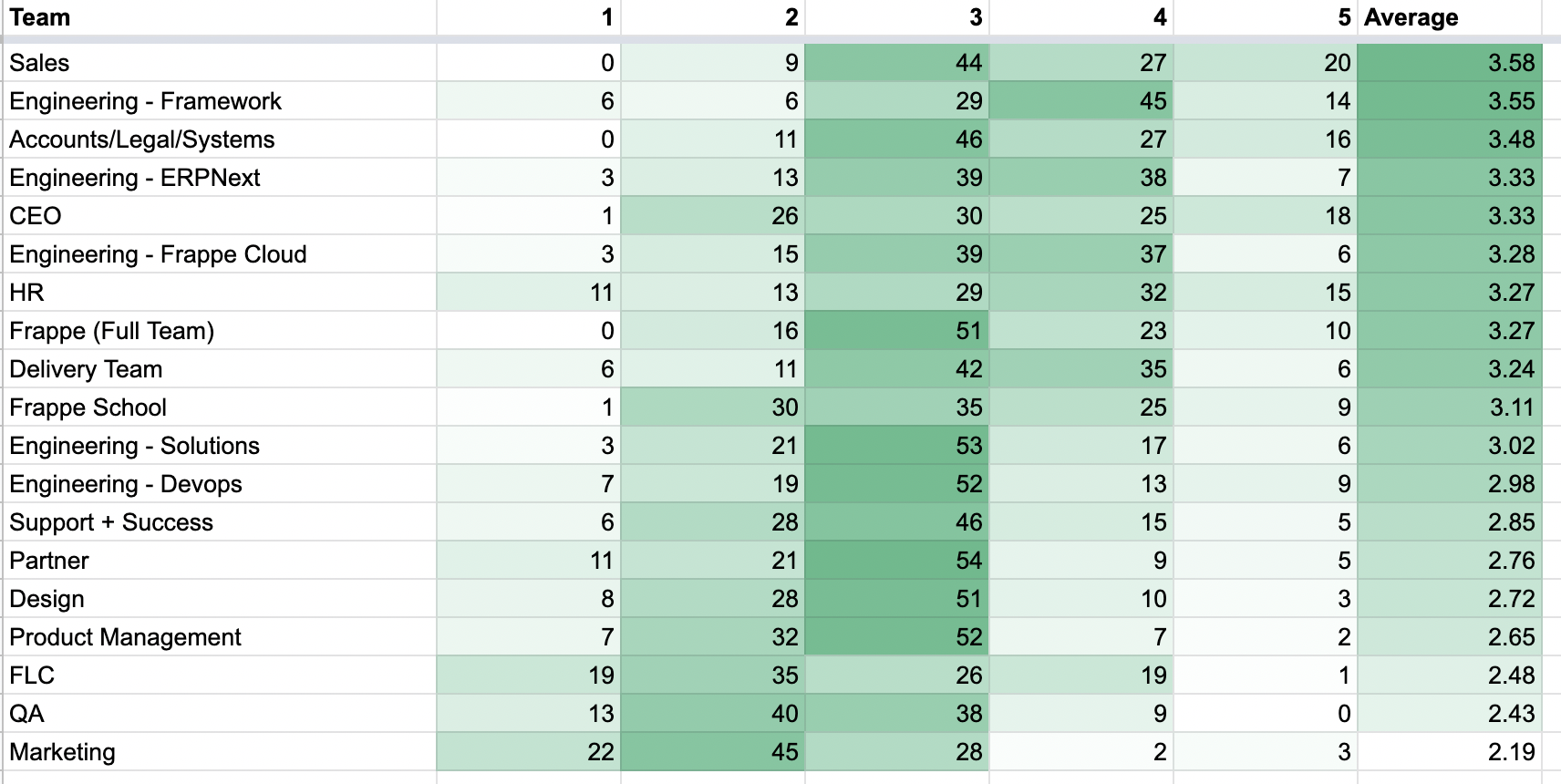

Here are the results! On average, 70 people voted on each team.
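For readers curious how a star-rating poll turns into a single score, here is a small sketch of the arithmetic. The vote counts below are hypothetical and are not our actual poll data; the function names are also just for illustration. Each team's score is the vote-weighted mean of the stars it received.

```python
import statistics


def mean_rating(votes_by_star):
    """Vote-weighted mean of a {star: number_of_votes} tally."""
    total_votes = sum(votes_by_star.values())
    if total_votes == 0:
        raise ValueError("no votes cast")
    weighted_sum = sum(star * count for star, count in votes_by_star.items())
    return weighted_sum / total_votes


def median_rating(votes_by_star):
    """Median star value, obtained by expanding the tally into single votes."""
    expanded = [star for star, count in sorted(votes_by_star.items())
                for _ in range(count)]
    return statistics.median(expanded)


# HYPOTHETICAL tallies (70 votes each), not the real results.
team_a = {1: 0, 2: 5, 3: 20, 4: 35, 5: 10}
team_b = {1: 6, 2: 2, 3: 12, 4: 40, 5: 10}

for name, tally in (("Team A", team_a), ("Team B", team_b)):
    print(name, round(mean_rating(tally), 2), median_rating(tally))
```

In this made-up example, Team B has more 4-star votes than Team A, yet its handful of 1-star votes pulls its mean slightly below Team A's, while the medians are the same. A mean is sensitive to a few strongly negative votes, which is worth keeping in mind when reading close results.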

My Analysis

- The Sales team wins this round, congratulations!! The sales team is not only the engine of the enterprise; they have also recovered from a leadership crisis and a few exits, and have still managed to close a reasonable number of deals. It also helps that they are very visible on our public channel, celebrating each win.

- The Framework team (our USP as an organisation) comes in a close 2nd. While they have more 4-star ratings, they also have a few 1-star ratings. Maybe people have been influenced by some internal ranting about some security practices.

- The systems team has done a fabulous job setting us up for ISO 9001 and 27001 (Quality and Information Security Management Systems). This is reflected in the ratings, and they round out the top 3.

- The newer teams (Partner, Product, Design, QA and Marketing) are lagging. I think this is because they are not yet very visible to the rest of the team and are yet to start delivering at full speed.

- The full team average (3.3) is higher than the average of all teams (3.0), indicating that not all teams have equal weight.

- The elected leadership council (Frappe Leadership Council - FLC) has a low rating. This was a complete surprise for me. The reason I can think of is that the FLC had proposed a model to kick-start new business units and bungled the implementation. There is definitely scope for introspection and improvement.

- My personal rating as CEO is surprisingly good. Of late I have started being more brutal in my feedback, but it seems people are getting the message I am trying to give. It is painful work that needs to be done to keep the team moving. I also think I do pretty okay at communicating with the team and am quite transparent about my thoughts, which works in my favour.

Conclusion

One opinion was that this would lead to people caring more about perceptions than reality. My view is that people often don’t share their work. Sharing their successes, being more “out there” and talking more about their work will not only boost their own sense of well-being but also make more people aware of different aspects of the organisation. Like the Buddhists say, everything is a perception and “perception and reality are dependent on each other”. It is much better to suck up to the entire team than to just one manager.

I also feel that this kind of feedback feels more objective and is easier to accept than personal feedback, which understandably may carry biases. It also gives each team a clear way to think about their value to the rest of the team and, by extension, to all stakeholders. It also throws up interesting opportunities for leadership. Members of lower-ranking teams now have the opportunity to turn things around and show that they can dramatically improve.

In conclusion, this was an excellent experiment, reinforcing my faith in democratic decision-making models. We plan to do this “State of the Union” poll at the last standup of every month, thereby giving each team feedback on how well they are aligning with the rest of the team and improving their deliverables.

What do you think? Can this model work, or should we go back to the experts? Watch this space!